Recently, I was working on a project where we had to gather some information. Since this was going to be a minor task executed only a few times a day, serverless technology was the perfect choice for this scenario.

With more than 200 services in Azure, it is important to choose the best fit for the scenario. I could easily have built an ASP.NET MVC app with a SQL Server back-end, but that would mean a continuously running virtual machine that I’d have to pay for all month. Hence, for the back-end, I decided to use Azure Table Storage, as the data is a flat, single-table structure. For the front-end, however, I was in a fix. Should I go for Azure Logic Apps or should I write an Azure Function? Considering there are already a lot of articles like this and this and many more, I thought an Azure Function would be a great choice. But then, I don’t want to touch JavaScript anymore :) So, I decided to take a different approach.

The Front-end: Microsoft Forms

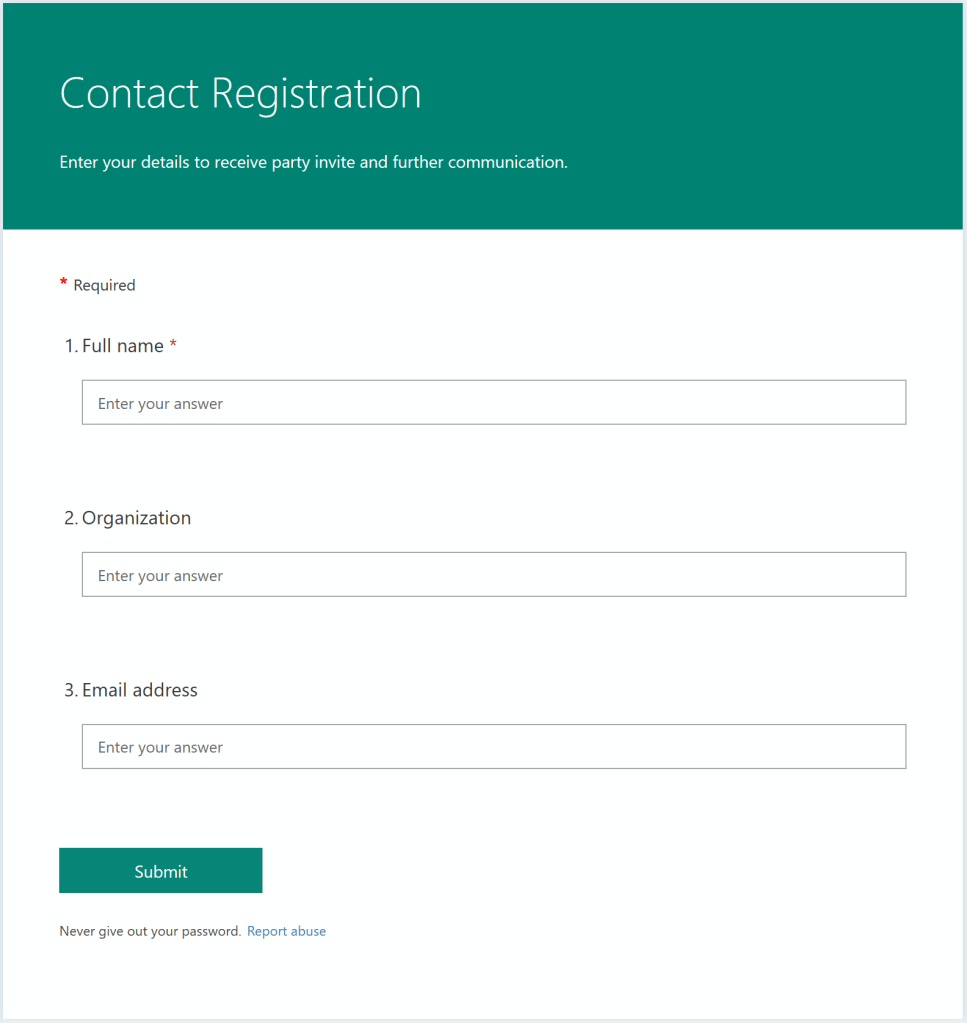

Rather than writing HTML & JavaScript for this simple page, I thought about using a technology designed for exactly this purpose: Microsoft Forms. I created a form and designed it to accept details from users (including people outside my organization).

The Back-end: Azure Table Storage

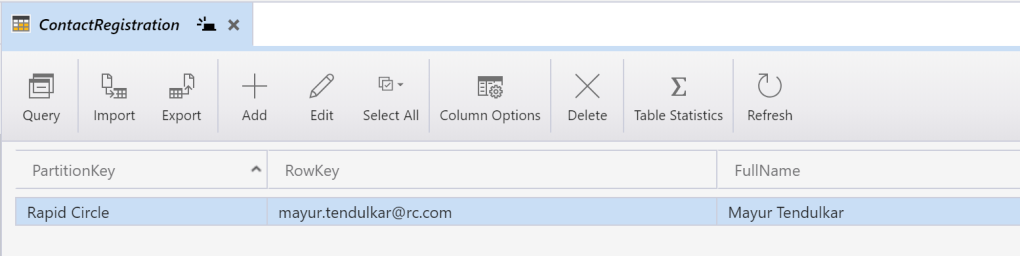

As mentioned earlier, I’m using Azure Table Storage to store the details, and this is the Microsoft Azure Storage Explorer view of it. You can see I’m using Organization as the PartitionKey and Email ID as the RowKey. This groups entries by organization and keeps each email address unique within its organization, which makes the data easy to store and manage.
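To make the key design concrete, here is a minimal Python sketch of how one form response could be shaped into a table entity. The field names (`organization`, `email`, `name`), the helper names, and the choice to lowercase the email are my assumptions for illustration, not something the Logic App does for you. The one hard rule it encodes is that Table Storage rejects `/`, `\`, `#`, `?` and control characters in PartitionKey and RowKey values.

```python
import re

# Characters Azure Table Storage does not allow in PartitionKey / RowKey:
# '/', '\', '#', '?', and control characters (U+0000-U+001F, U+007F-U+009F).
_FORBIDDEN = re.compile(r"[/\\#?\x00-\x1f\x7f-\x9f]")


def to_key(value):
    """Trim whitespace and replace characters that table keys cannot contain."""
    return _FORBIDDEN.sub("_", value.strip())


def to_entity(response):
    """Map one form response (hypothetical field names) to a table entity."""
    return {
        "PartitionKey": to_key(response["organization"]),
        # Keys are case-sensitive, so lowercasing the email (an assumption
        # made here) avoids two rows for the same address.
        "RowKey": to_key(response["email"]).lower(),
        "Name": response.get("name", ""),
    }


entity = to_entity({
    "organization": "Contoso R&D/India",
    "email": " Jane.Doe@Example.com ",
    "name": "Jane",
})
print(entity)
```

Organization names typed into a free-text field are the likeliest place for a stray `/` or `#`, so it is worth cleaning that value before it becomes the PartitionKey.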

The Compute: Azure Logic Apps

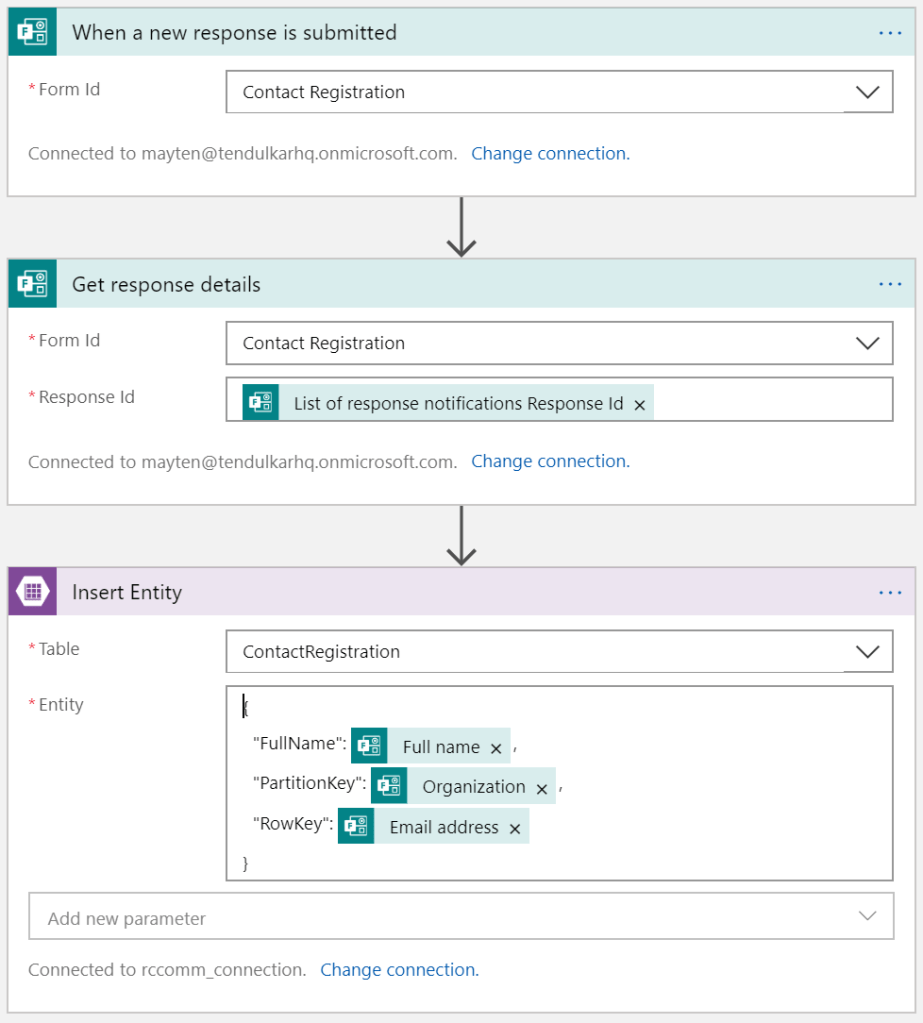

The last bit of this app is to get the data from Microsoft Forms and push it into Azure Table Storage for further use. Luckily, there is an Azure Logic Apps connector available for Microsoft Forms. However, the trigger used here is available only if you sign in with a work/school or Office 365 account. I hope this trigger will be made available for forms created with a personal Microsoft account as well.

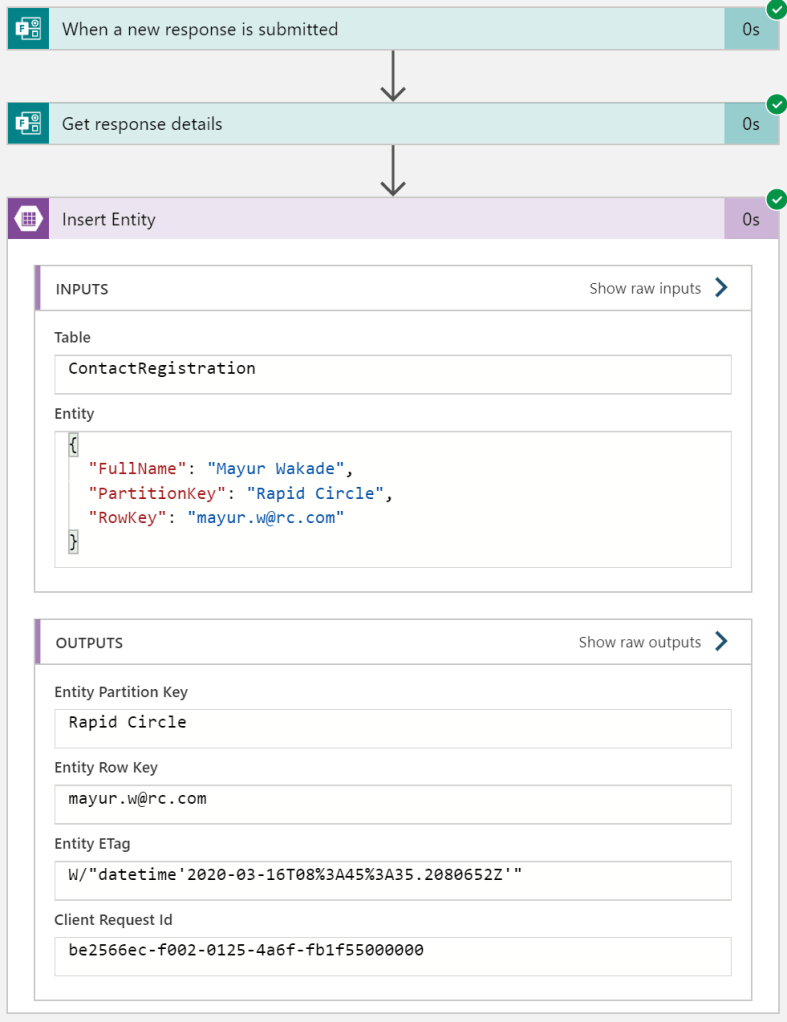

Just save this Azure Logic App and it will execute whenever there is a new entry in the Microsoft Form. You can see the details of each entry and its execution on the Azure Portal.
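One thing worth thinking through is what happens when the same person submits the form twice. With Organization as PartitionKey and Email ID as RowKey, the second submission targets an entity that already exists: a plain insert is rejected with a conflict, while an insert-or-replace overwrites the earlier row. The sketch below imitates that behaviour with an in-memory dictionary. It is not the Logic Apps runtime or the storage SDK, and every function and field name in it is hypothetical.

```python
class EntityExists(Exception):
    """Raised when a plain insert hits an existing (PartitionKey, RowKey)."""


def insert(table, entity):
    """Plain insert: fails if the key pair is already present."""
    key = (entity["PartitionKey"], entity["RowKey"])
    if key in table:
        raise EntityExists(key)
    table[key] = entity


def insert_or_replace(table, entity):
    """Upsert: the newest submission wins."""
    table[(entity["PartitionKey"], entity["RowKey"])] = entity


def handle_response(table, response, write=insert_or_replace):
    """Stand-in for one Logic App run: shape the response, then write it."""
    entity = {
        "PartitionKey": response["organization"],
        "RowKey": response["email"],
        "Phone": response.get("phone", ""),
    }
    write(table, entity)


table = {}  # in-memory stand-in for the Azure table
first = {"organization": "Contoso", "email": "jane@contoso.com", "phone": "111"}
second = {"organization": "Contoso", "email": "jane@contoso.com", "phone": "222"}

handle_response(table, first)
handle_response(table, second)  # replaces the first row
print(len(table), table[("Contoso", "jane@contoso.com")]["Phone"])

try:
    handle_response(table, second, write=insert)  # plain insert now conflicts
except EntityExists as exc:
    print("conflict on", exc.args[0])
```

If a resubmission should update the earlier entry, the replace-style write is the one to pick; if duplicates should be flagged instead, a plain insert will surface them as failed runs.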

Conclusion:

Azure Logic Apps with Microsoft Forms makes it super easy to build a UI where we have to gather quick inputs from users. For example: registration forms, contact forms, party invites, etc. Further, using Logic Apps, you can send automated emails, auto-generate RSVP codes, etc., as and when required. This is a perfect low-code (or shall I say no-code) solution.